Customer-facing vs internal copilots: how to prioritize LLM use cases

Enterprise leaders exploring generative AI often face a portfolio of possible projects. Should you start with internal AI copilots that boost employee productivity, customer-facing AI tools that engage users, or innovative AI-powered product features that differentiate your offerings?

Each category promises value and carries its own risks.

This guide breaks down the pros and cons of each approach, explains why most companies begin with internal copilots, and shows how AI user analytics can inform a data-driven roadmap.

We’ll also share real-world examples following the common pattern: starting internally, then expanding once usage patterns are clear.

Throughout, we’ll see how measuring LLM adoption metrics (not just system KPIs) and understanding how to measure LLM success can make the difference between pilot projects and enterprise-wide impact.


Internal AI copilots: Quick wins in a safe sandbox

Internal AI copilots are AI assistants built for employees: for example, an “internal ChatGPT” that helps HR answer policy questions, or a tool that helps IT support automate ticket triage. These use cases focus on internal operations, offering faster wins in a controlled environment.

Pros:

Safety and control: Internal copilots can operate on closed data and networks, so they pose lower risk to the business. Early projects can deliver productivity gains and cost savings by automating routine tasks (e.g., summarizing meetings, drafting reports).

Data security: Data stays within company walls, easing compliance worries. If the AI makes mistakes, they are private and less likely to damage the brand or violate regulations. This "safe sandbox" lets teams experiment and learn without public exposure.

Cons:

Limited differentiation: While valuable, internal copilots offer limited long-term differentiation. They improve efficiency but typically don’t create new revenue or customer value directly. Competitors can implement similar internal tools, so any advantage is largely in operational efficiency, not market positioning.

Internal trust risk: An internal AI assistant can still make mistakes, and if it gives employees incorrect information, it can erode trust internally. In fact, some internal copilots have been abandoned not because the models were incapable, but because employees stopped trusting them after wrong or confusing answers. Sustaining adoption requires governance and iteration, even if the stakes are lower than with customers.

Customer-facing AI tools: High potential, high visibility risk

Customer-facing AI tools include chatbots on your website, AI-driven support agents, or AI features in customer apps. These promise direct impact on customer experience and potentially new value streams, but they come with greater exposure.

Pros:

Enhanced user experience: Done well, a customer-facing AI can enhance user satisfaction and engagement. It might provide 24/7 support, personalized recommendations, or faster service at scale.

Competitive edge: A reliable customer-facing AI can differentiate your brand and even generate new revenue (for instance, AI advisors upselling products). Being an early mover could also yield reputational benefits and learning advantages.

Cons:

Reputation risk: A bad answer or a hallucinated response given to a customer can harm your brand’s reputation instantly. External use cases must meet a higher bar for accuracy, tone, and compliance. Mistakes in public can go viral or invite regulatory scrutiny.

Trust barriers: Companies remain cautious here: many have AI chatbots summarizing internal data, but very few allow AI to interact directly with paying customers. Without strong user feedback loops, you might not know if the AI is actually helping customers or quietly frustrating them. This lack of confidence often causes organizations to delay customer-facing projects until the technology (and their trust in it) matures.

AI product innovation: Long-term upside, experimental path

Beyond specific assistants, some companies embed LLM capabilities into their core products or create entirely new AI-driven offerings. For example, adding an AI writing assistant into a software suite, or an AI analytics feature in a SaaS product. These product innovations aim for long-term differentiation but are the most experimental.

Pros:

Game-changing features: AI-powered product features can become true game-changers if they align with customer needs. They offer the chance to leapfrog competitors by delivering capabilities that were not possible before (e.g., natural language interfaces, intelligent automation within a product).

Strategic advantage: Over time, such features can strengthen customer loyalty and open new markets. The upside here is strategic differentiation: successful AI innovations embedded in products might define the next generation of offerings in your industry.

Cons:

Uncertainty and data needs: The path to that upside is uncertain and data-intensive. It’s hard to scale an experimental feature without real usage data guiding its development. Until you have real users interacting with the AI feature, you won’t know which functions hit the mark and which miss.

Experimental risk: Without usage data, it is challenging to justify large investments or rollouts. The innovation might sound exciting, but will it deliver value and be adopted widely? Hallucinations or errors in a core product feature can also undermine the product’s overall reliability.

Why enterprises start with internal copilots first

Given the trade-offs, it’s no surprise that most enterprises kick off their AI journey with internal copilots. Simply put, internal use cases feel safer and easier to control. Companies naturally begin AI projects "behind the scenes" where any mistakes stay in-house.

Another reason is quick, tangible wins. An internal bot that automates IT support tickets or drafts HR reports can show immediate productivity gains. This helps build the business case for AI by delivering value early, even if it’s limited to cost savings or efficiency. It’s easier to get stakeholder buy-in for low-risk improvements - "let’s save our employees 20% of their time with an AI copilot" - than for a high-profile customer experiment.

Finally, starting internally allows teams to learn and iterate before facing the public. It creates a testing ground to understand the technology’s limitations, gather feedback from employees, and establish governance (e.g., prompt guidelines, approval workflows) under safer conditions. After all, if you cannot be sure your AI copilot is reliably helping employees, how can you trust it with customers?

The challenge of scaling beyond internal projects

If internal AI pilots are going well, the next step is scaling up and reaching outward. This is where many enterprises hit obstacles. Moving to customer-facing or broader product AI use means confronting a new set of challenges around user behavior, trust, and performance at scale.

User drop-off and adoption friction: It’s common to see strong initial curiosity for a new AI tool, followed by a drop-off in usage. Employees or customers might try a copilot once or twice, but if it doesn’t deliver real value, they won’t come back. Most "failures" aren’t system crashes; they’re sessions where the user gave up.

Hallucinations and trust erosion: Every enterprise AI team worries about hallucinations. In internal settings, a made-up answer might waste someone’s time; in customer settings, it can be a trust disaster. A single piece of incorrect financial advice from an AI assistant, for instance, could lose a bank a customer forever.

Measuring success is hard: How do you measure LLM success in a business sense? Traditional KPIs such as API throughput, latency, and error rates won’t tell you if users are happy or if the solution is effective. Many organizations lack insight into whether users achieve their goals or get frustrated. This measurement gap makes it difficult to iterate and improve because the team isn’t sure what to fix or how to prove progress.
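
To make the drop-off point concrete, here is a minimal Python sketch of two user-level signals that system KPIs miss: the share of sessions where the user gave up, and the share of users who keep coming back. The `Session` record, its `abandoned` flag, and the three-session threshold for a "returning" user are illustrative assumptions, not a standard definition or any particular product's schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Session:
    user_id: str
    abandoned: bool  # hypothetical flag: the user left without reaching a goal


def usage_health(sessions: list[Session], min_sessions: int = 3) -> dict[str, float]:
    """Return the abandoned-session share and the returning-user share."""
    if not sessions:
        return {"abandonment_rate": 0.0, "returning_user_rate": 0.0}
    # Count how many sessions each user started.
    per_user = Counter(s.user_id for s in sessions)
    # A user counts as "returning" once they reach min_sessions sessions.
    returning = sum(1 for n in per_user.values() if n >= min_sessions)
    abandoned = sum(1 for s in sessions if s.abandoned)
    return {
        "abandonment_rate": abandoned / len(sessions),
        "returning_user_rate": returning / len(per_user),
    }


# Made-up sample data for illustration only.
sessions = [
    Session("ana", False), Session("ana", False), Session("ana", True),
    Session("ben", True),
    Session("cy", True), Session("cy", False),
    Session("dee", False),
]
report = usage_health(sessions)
print(report)
```

On this sample, three of seven sessions were abandoned and one of four users came back at least three times. Neither number would show up in a latency or error-rate dashboard.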

Using user analytics to drive LLM success

How can organizations overcome those adoption and scaling challenges? The answer lies in complementing technical monitoring with user-centric analytics. Rather than guessing or relying on sparse feedback, leading teams instrument their AI solutions to capture rich data on usage.

This emerging practice, called AI user analytics, grounds prioritization decisions in real data instead of hunches. By tracking real user behavior, you can measure adoption and success for each use case, pinpoint where friction arises, and base roadmap decisions on evidence.

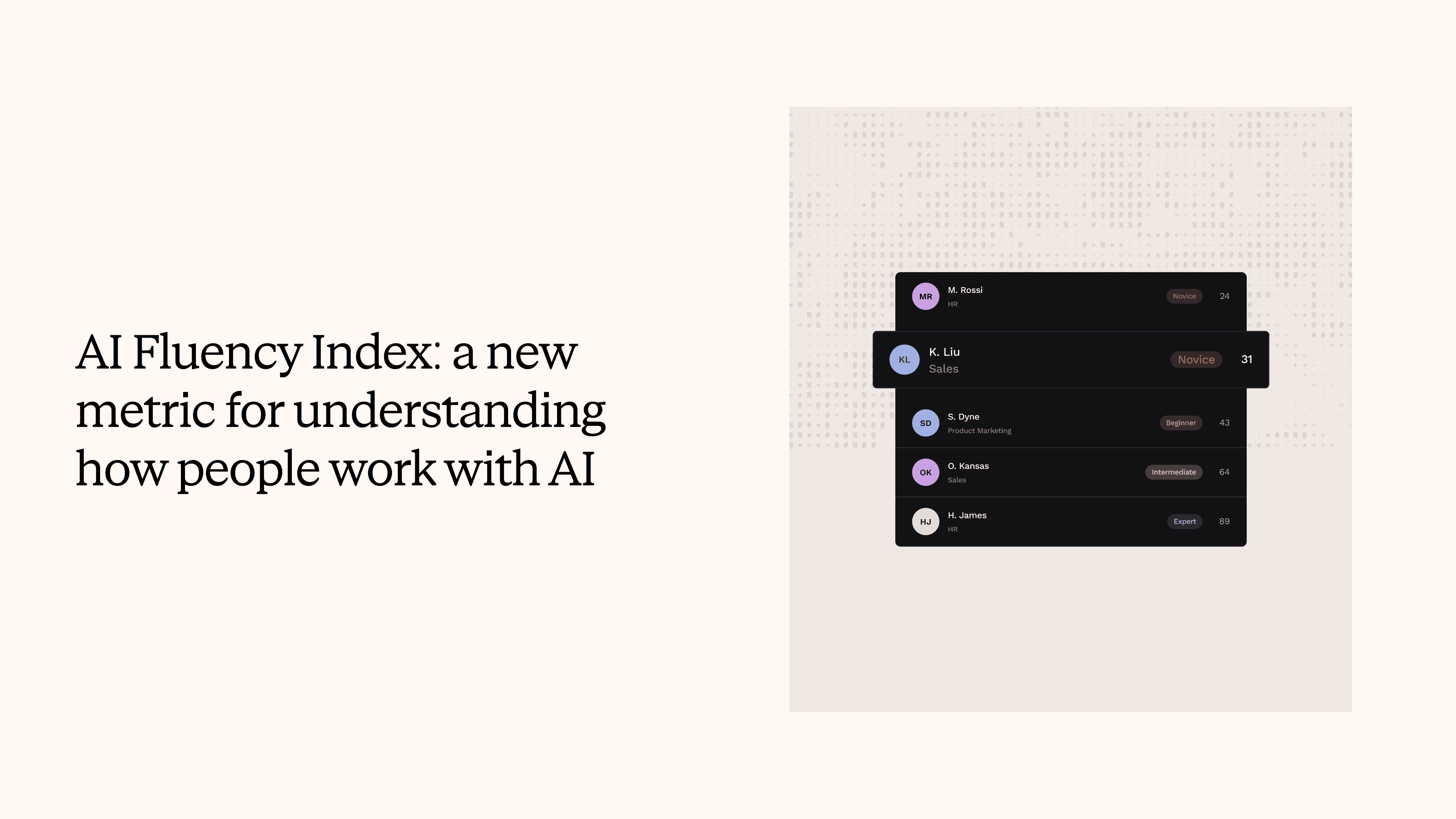

Measure true adoption: User analytics goes beyond vanity metrics. For each copilot or AI feature, you can monitor how many people actually engage, how often, and what results they get. Key LLM adoption metrics include things like conversation completion rate and intent success rate.

Identify friction and drop-off patterns: Analytics can spotlight exactly where and when users hit trouble. For instance, you might discover that users frequently rephrase their queries multiple times in a certain scenario, a sign the AI isn’t understanding their intent initially.

Detect moments of satisfaction or breakdown: Conversation analytics tries to infer user sentiment and success from the interaction flow. If a user says "Thanks, that's helpful," that’s a clear signal of satisfaction. If they grow frustrated and quit, that implicit feedback suggests the AI wasn’t helping.

Feed decisions with real user needs: The biggest benefit is the strategic clarity user analytics provides. When you know which use cases are thriving and which are floundering, you can decide where to invest and what to cut based on actual user signals, not just initial assumptions.
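
The metrics named above can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not a description of how any analytics product computes them: the `Conversation` record and its `completed` and `intent_fulfilled` labels are hypothetical, the rates are defined here simply as the share of conversations carrying each label, and a "rephrase" is approximated as two consecutive user messages whose text similarity (via the standard library's `difflib.SequenceMatcher`) is at least an arbitrary 0.6.

```python
from collections import defaultdict
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class Conversation:
    use_case: str
    user_messages: list[str]
    completed: bool         # hypothetical label: user did not quit mid-flow
    intent_fulfilled: bool  # hypothetical label: from review or a classifier


def count_rephrases(messages: list[str], threshold: float = 0.6) -> int:
    """Count consecutive user messages that look like rewordings."""
    count = 0
    for prev, curr in zip(messages, messages[1:]):
        ratio = SequenceMatcher(None, prev.lower(), curr.lower()).ratio()
        if ratio >= threshold:
            count += 1
    return count


def summarize(conversations: list[Conversation]) -> dict[str, dict[str, float]]:
    """Aggregate adoption metrics per use case."""
    grouped: dict[str, list[Conversation]] = defaultdict(list)
    for conv in conversations:
        grouped[conv.use_case].append(conv)
    summary = {}
    for use_case, convs in grouped.items():
        n = len(convs)
        summary[use_case] = {
            "conversations": n,
            "completion_rate": sum(c.completed for c in convs) / n,
            "intent_success_rate": sum(c.intent_fulfilled for c in convs) / n,
            "rephrases_per_conversation": sum(
                count_rephrases(c.user_messages) for c in convs
            ) / n,
        }
    return summary


# Made-up sample data for illustration only.
conversations = [
    Conversation("it_support", ["printer is offline"], True, True),
    Conversation(
        "it_support",
        ["how do I reset my vpn password", "how can I reset my vpn password"],
        True,
        False,
    ),
    Conversation("hr", ["what is the parental leave policy"], False, False),
    Conversation("hr", ["how many vacation days do I have"], True, True),
]
summary = summarize(conversations)
for use_case, metrics in summary.items():
    print(use_case, metrics)
```

Comparing these numbers across use cases is what turns the prioritization question into an evidence question: a copilot with high completion but low intent success, for example, is being used but is not yet delivering what users came for.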

Introducing Nebuly: User analytics for generative AI products

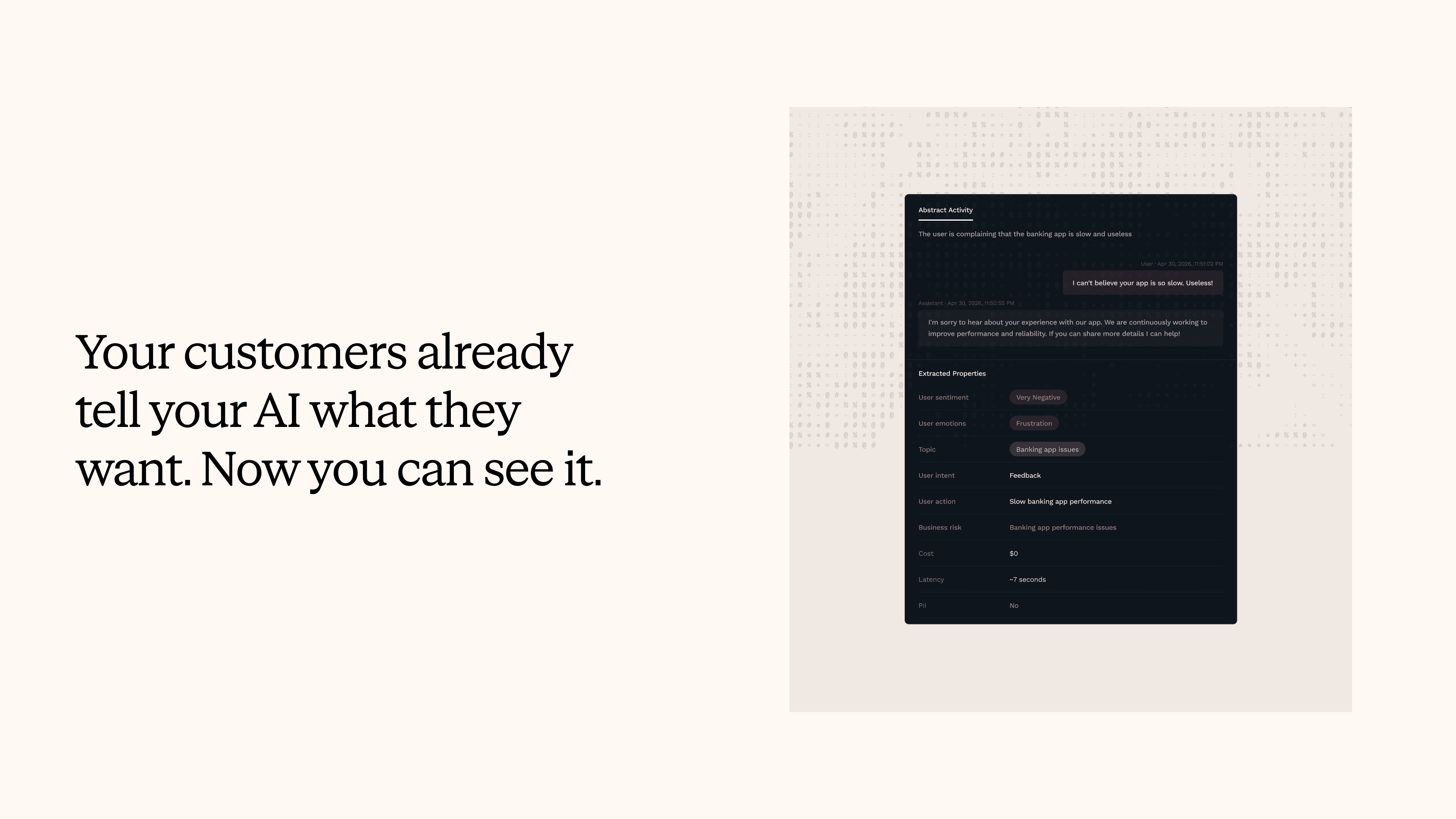

Making all the above possible requires the right tooling. Nebuly is a platform for GenAI user analytics, designed to give teams that "human layer" insight that technical logs don’t show. Think of it as "Google Analytics for AI conversations". It automatically collects and analyzes every interaction your users or employees have with your LLM-powered tools.

Unlike traditional observability tools, Nebuly focuses on user-centric metrics and insights. It tracks things like user intents and whether they were fulfilled, where users had to retry or gave up, and overall satisfaction trends. It correlates conversation patterns with outcomes, helping you answer questions like, "What percentage of our chatbot sessions end in success?"

Crucially, Nebuly helps you pinpoint friction and build trust. It can flag when an AI response likely confused the user, or alert you to compliance risks. All of this is presented in user-friendly dashboards and reports that AI product teams, business stakeholders, and compliance officers alike can use.

By having this kind of analytics in place, enterprises gain the confidence to expand their AI projects. Nebuly's customers often start with one pilot and, after seeing the rich user data, are able to iterate faster and prove ROI to stakeholders. In effect, Nebuly provides the AI usage tracking tools and analysis that turn anecdotal usage into hard metrics.

Real-world adoption patterns: From internal wins to wider rollout

To show how these principles work in practice, here are two examples based on real patterns we’ve observed: one in banking and one in manufacturing. Both follow the same journey: start with an internal copilot, learn from usage, then expand once the data supports it.

Global Bank:

A multinational bank launched a generative AI assistant for its compliance team and call center agents. The aim was internal efficiency: faster access to information. Usage was solid, but leaders were hesitant to expand. They worried about errors on customer calls and risks of exposing sensitive data.

With Nebuly’s analytics, the bank’s transformation team could track every conversation topic in real time, spot hallucinations or potential PII leaks, and see where employees lost trust. For example, repeated rephrases of a specific regulation flagged unclear answers. They also found outdated policy details, which were quickly corrected in the knowledge base.

Armed with this data and audit logs for compliance, the bank improved accuracy and showed stakeholders the assistant was reliable. Only then did they launch a small customer-facing pilot: a chatbot giving retail customers basic account info. Because they had evidence of accuracy, and monitoring in place, they moved forward with confidence. This phased approach, internal first and refined with analytics, enabled responsible expansion.

Industrial Manufacturer:

An international manufacturer deployed dozens of internal AI copilots across engineering, support, and supply chain teams. These answered questions on technical specs, assembly troubleshooting, and more. The challenge was visibility: with so many tools, which ones worked? Surveys and anecdotal feedback were too slow and fragmented.

By deploying Nebuly in a self-hosted environment, the company gained a unified view of usage. Analytics showed, for example, that the support copilot resolved ~75% of queries without escalation, while the engineering assistant had low adoption and high drop-off on certain topics. With this clarity, they prioritized two improvements: adding training data to the engineering copilot and integrating the successful support bot more deeply into workflows.

As adoption grew, they quantified that automated analytics gave them 100× more feedback than manual methods. With proven internal results, they then explored an AI-powered customer portal built on the same knowledge base. In other words, once internal usage reached critical mass and they had evidence of reliability, extending the benefits externally became far less risky.

Conclusion:

Enterprise transformation leaders have no shortage of promising AI ideas – the art is in choosing the right battles and winning them.

Internal AI copilots, customer-facing tools, and AI product innovations all have merit. The prudent path for most organizations is to get some quick wins internally (where AI can safely boost productivity), but to do so with an eye toward the bigger picture.

That means instrumenting those early projects with user analytics so you truly understand what drives success or failure. With that foundation, you can iterate rapidly, build trust in the technology, and gradually take on higher-impact (and higher-risk) applications like customer-facing AI and bold product features.

By prioritizing use cases based on user-driven evidence, you ensure that resources go to the AI initiatives that will deliver real value. It’s not about internal vs external as a one-time choice – it’s about sequence and feedback loops.

Start where you can learn the fastest and do so in a controlled way, then let the data guide you outward. Generative AI’s long-term upside for your enterprise will come not from one-off pilots, but from a cycle of deploying, measuring, and improving. In that journey, having the right analytics – a “user intelligence” layer – is what turns guesswork into strategy.

As Nebuly’s experience shows, when you measure what matters (user intent, adoption, satisfaction), you gain the insight to confidently scale what works and fix what doesn’t.

Businesses that use this approach are moving beyond the first wave of internal copilots and into AI products that are backed by data, driven by user needs, and delivered with confidence.

The question isn’t which LLM use case is best in general; it’s which one is proving best for your organization, as shown by your users. With the right visibility, the answer becomes clear.

See how this works in practice: book a demo with Nebuly to explore your own LLM user analytics.
