Large language models are introducing a new interface for engaging with products.

Instead of pointing and clicking, users interact dynamically in natural language.

This paradigm shift creates a radically new way for AI teams and product leaders to think about product analytics, moving from understanding the user journey through clicks to understanding the flow of the conversation between the user and the LLM.

What’s the difference between product and LLM analytics?

Traditional product analytics tools (Mixpanel, Amplitude, etc.) fall short when it comes to uncovering insights from users of large language models. User interaction with LLM-based products largely revolves around typing text and pressing Enter, then typing more text and pressing Enter again, over and over. Traditional product analytics tools cannot capture the essence of what users are typing, and thus fail to answer the most important questions that arise from LLM products:

- What is the users’ primary intent when interacting with LLMs?

- What are the most common follow-up questions or actions users take after receiving a response from the LLM?

- Which are the most relevant topics discussed by the users? Are they satisfied with the answers?

- Are there situations where users seem confused or dissatisfied with the LLM's responses?

- Are there opportunities to improve the LLM's responses based on user feedback and interactions?

How does user analytics for LLMs work?

The above questions require a different perspective on analytics to make sense of the massive amount of user interactions with the LLMs.

LLM user analytics requires a robust framework that covers the following four steps:

- Understand the actions within a user-LLM interaction

- Understand the properties of an interaction

- Extract the flow of a conversation

- Detect user engagement versus frustration

Understand the actions within a user-LLM interaction

We define a user-LLM interaction as the dynamic exchange between the user's prompt and the corresponding output of the LLM.

Each interaction involves specific user actions that can be classified into the following types:

- Active User Actions (AUA): Active User Actions are the explicit requests that users make of the LLM. For instance, when a user explicitly asks the LLM to provide an explanation or to suggest an alternative response, they are performing an Active User Action. It's a clear directive from the user for a specific kind of response.

- Passive User Actions (PUA): Passive User Actions encompass the subtle, implicit behaviors users exhibit without directly instructing the LLM. Examples include copying the LLM's previous response and pasting it into a new prompt or subtly adding context to a query before posing it. These actions give clues about user intentions or needs without explicit commands.

- Assistant Actions (AA): Think of Assistant Actions as the output that the LLM produces in response to a user prompt. This category doesn't consider all the steps or tasks in the LLM chain, but focuses only on the final action that provides the answer to the user.
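The three action types above can be represented with a small data model. The sketch below is illustrative only: the class names, the `paste_previous_response` label, and the eight-word threshold are assumptions made for this example, not part of any particular platform. It also shows one possible heuristic for a Passive User Action, flagging a prompt that reuses a long verbatim run of words from the LLM's previous response.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from enum import Enum
from typing import Optional


class ActionKind(Enum):
    ACTIVE_USER = "AUA"   # explicit request from the user
    PASSIVE_USER = "PUA"  # implicit behavior, e.g. pasting a response back
    ASSISTANT = "AA"      # final output of the LLM


@dataclass
class Action:
    kind: ActionKind
    label: str  # hypothetical label, e.g. "ask_explanation"


def longest_shared_run(a_words, b_words):
    """Length of the longest run of consecutive words found in both lists."""
    matcher = SequenceMatcher(None, a_words, b_words, autojunk=False)
    match = matcher.find_longest_match(0, len(a_words), 0, len(b_words))
    return match.size


def detect_paste_back(prompt: str, previous_response: str,
                      min_words: int = 8) -> Optional[Action]:
    """Return a Passive User Action if the prompt copies the previous response.

    min_words is an assumed threshold; tune it on real conversations.
    """
    run = longest_shared_run(prompt.lower().split(),
                             previous_response.lower().split())
    if run >= min_words:
        return Action(ActionKind.PASSIVE_USER, "paste_previous_response")
    return None
```

In practice, Active User Actions and Assistant Actions would more likely be labeled by a classifier or an LLM than by string matching; the heuristic here only covers the copy-and-paste case mentioned above.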

Understand the properties of an interaction

Every user-LLM interaction is rich in nuanced properties that define the specifics of an interaction.

Some examples of relevant properties that are useful to extract are:

- Format, style, verbosity and language: The Format indicates whether the interaction resembles an article, blog, paper, or another content type. The Style delves deeper, highlighting the tone, be it formal, humorous, or another mood. Verbosity measures the length of the generation, while Language indicates the output language, such as English or Spanish.

- Topic: What's the main theme or subject of the conversation?

- Sources: If the LLM pulls information from external sources, as in a RAG system, which are the main ones?
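As a rough illustration, the properties listed above could be stored as one record per interaction and then aggregated. The field names and helper functions below are hypothetical; how format, style, and topic are actually inferred (for example, with a classifier) is outside the scope of this sketch. Verbosity is approximated here as a simple word count.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class InteractionProperties:
    content_format: str  # e.g. "article", "blog", "paper"
    style: str           # e.g. "formal", "humorous"
    language: str        # e.g. "English", "Spanish"
    topic: str
    verbosity: int       # word count of the generated text
    sources: List[str] = field(default_factory=list)  # documents used, e.g. in a RAG system


def word_count(text: str) -> int:
    """Approximate verbosity as the number of whitespace-separated words."""
    return len(text.split())


def top_sources(records: List[InteractionProperties], n: int = 3) -> List[Tuple[str, int]]:
    """Most frequently used external sources across interactions."""
    counts = Counter(src for rec in records for src in rec.sources)
    return counts.most_common(n)
```

Aggregating these records by topic or source is one way to answer questions such as which topics users discuss most.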

Extract the flow of a conversation

By extracting the actions and inherent properties of each interaction, it is possible to map out the entire conversation's trajectory. This means going beyond isolated interactions and visualizing the holistic 'flow' — a sequential map detailing the steps users take to reach their desired outcome. Understanding this flow provides valuable insights into user behavior, identifying patterns, pain points, and moments of satisfaction.
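One simple way to approximate this flow, assuming each conversation has already been reduced to an ordered list of action labels, is to count how often one step follows another across many conversations. The labels used in the usage note are made up for illustration.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def transition_counts(conversations: Iterable[List[str]]) -> Counter:
    """Count how often each action label is directly followed by another."""
    counts: Counter = Counter()
    for steps in conversations:
        # Pair every step with the step that comes right after it.
        for current, following in zip(steps, steps[1:]):
            counts[(current, following)] += 1
    return counts


def most_common_paths(conversations: Iterable[List[str]],
                      n: int = 5) -> List[Tuple[Tuple[str, str], int]]:
    """The n most frequent step-to-step transitions."""
    return transition_counts(conversations).most_common(n)
```

For example, given the conversations `["ask_explanation", "explain", "ask_alternative"]` and `["ask_explanation", "explain", "paste_previous_response"]`, the transition `("ask_explanation", "explain")` is counted twice. Frequent transitions sketch the typical path, while rare ones may point at pain points worth inspecting.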

Detect user engagement versus frustration

Drawing on our understanding of (i) actions, (ii) properties of interactions, and (iii) the conversation flow, we can differentiate signs of genuine engagement from indicators of growing frustration.

Engagement may manifest itself as a series of enthusiastic follow-ups, thorough exploration of subtopics, or frequent use of the LLM's responses through actions such as copying and pasting. On the flip side, repetitive inquiries on a similar topic, slight rephrasing of the same questions without moving on to new ones, or abrupt shifts in topic can be tell-tale signs of growing frustration.
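The rephrasing signal can be sketched with a basic word-overlap measure. This is a minimal heuristic under stated assumptions: Jaccard similarity on lowercase words stands in for a real semantic similarity model, and the 0.6 threshold is an arbitrary starting point rather than a validated value.

```python
from typing import List


def jaccard(a: str, b: str) -> float:
    """Word-level Jaccard similarity between two prompts (0.0 to 1.0)."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    if not words_a or not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


def rephrase_streak(prompts: List[str], threshold: float = 0.6) -> int:
    """Longest run of consecutive prompts that closely resemble the previous one."""
    longest = current = 0
    for previous, prompt in zip(prompts, prompts[1:]):
        if jaccard(previous, prompt) >= threshold:
            current += 1
            longest = max(longest, current)
        else:
            current = 0  # the user moved on to something different
    return longest
```

A long streak suggests the user keeps rewording the same question without getting a satisfying answer. It is only one signal, and it would need to be combined with the other indicators described above before labeling a conversation as frustrated.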

Identifying the root of such frustration is critical to the continued improvement of LLM interactions. Is the user dissatisfied because of the model's inherent knowledge limitations? Perhaps it's the LLM's occasional inability to match a user's desired tone or style. Other potential triggers could be verbosity, a perceived lack of creativity, or output that doesn't quite match the user's expectations.

Summary

In the years to come, billions of people will interact with LLMs every day. Traditional product analytics tools fall short when it comes to uncovering insights from users of large language models.

In this article, we reviewed a framework for LLM user analytics, including an explanation of the main components (interactions, actions, properties, and conversation flow) that are needed to understand users' satisfaction and frustration.

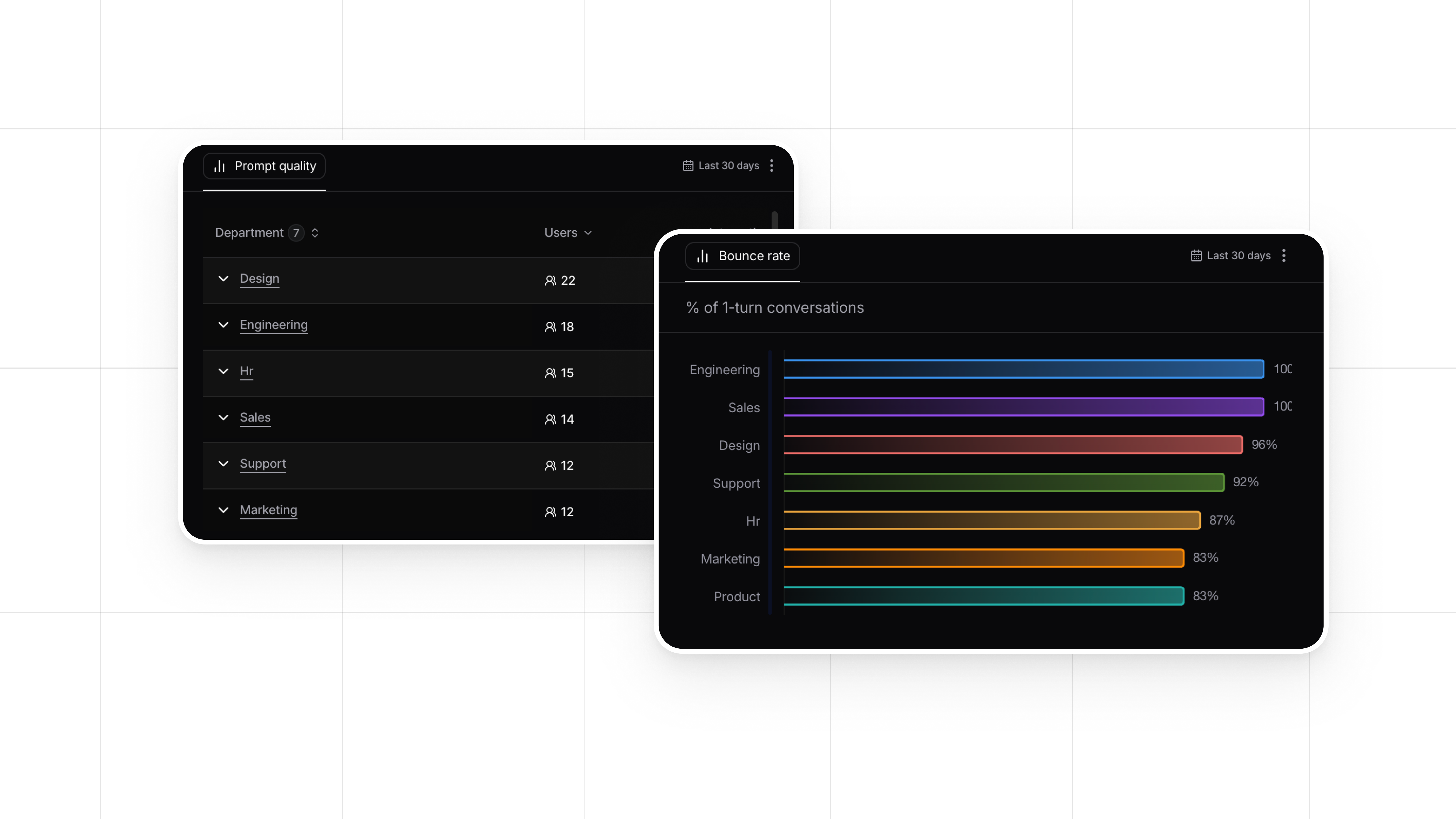

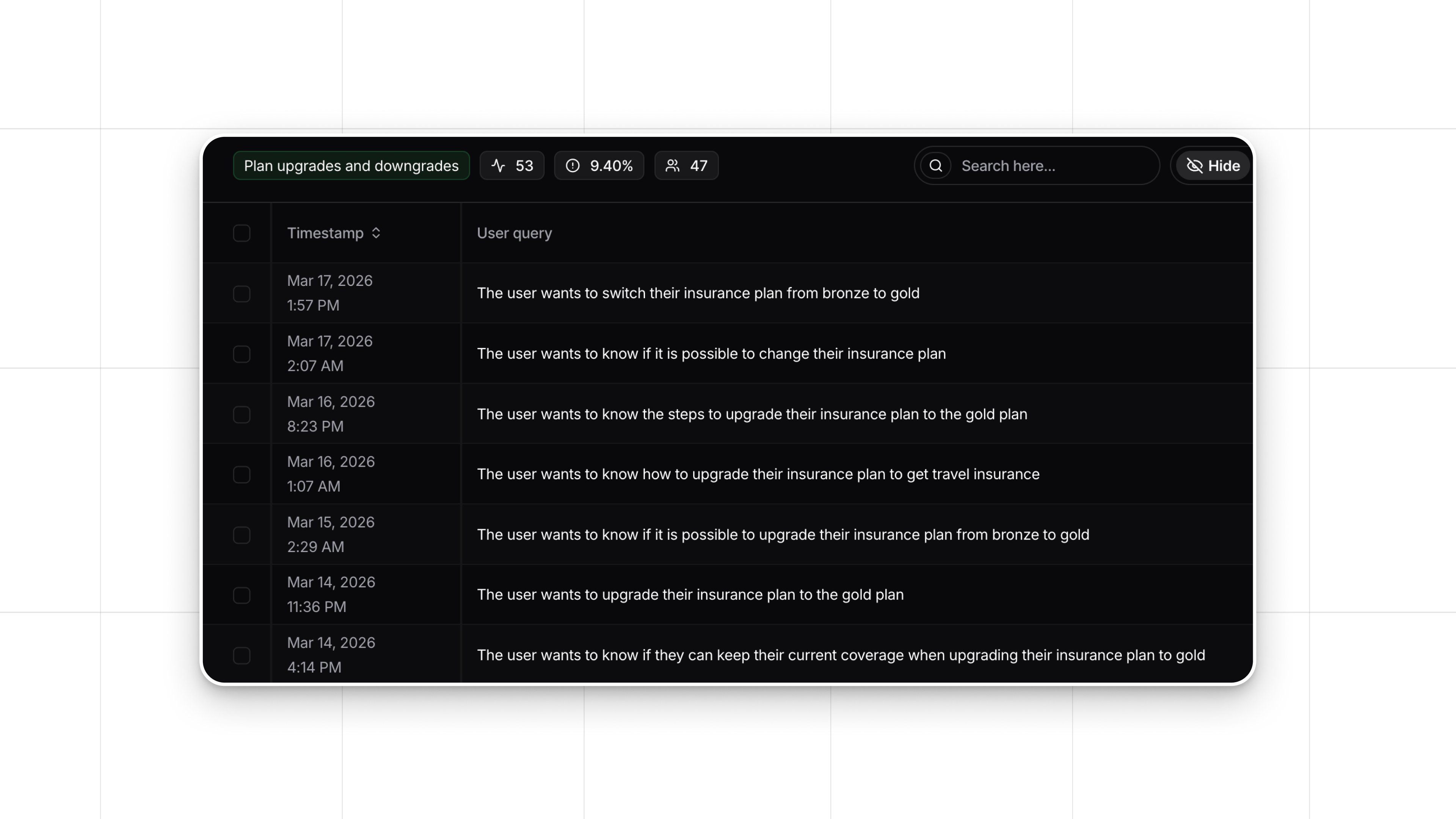

Luckily, you don't have to do this manually: you can use an LLM user analytics platform like Nebuly. Nebuly takes care of all of these considerations automatically and gives you instant visibility into your LLM user data.