Why multi-agent deployments make task-level visibility an enterprise priority

TLDR

Enterprises are moving from single AI agents to multi-agent systems quickly. According to Salesforce’s 2026 Connectivity Benchmark Report, organizations run an average of 12 agents today, with that number projected to grow 67% within two years. When users interact across multiple agents, new categories of behavioral signal emerge that do not exist in single-agent environments. Users develop agent preferences, express business-level concerns across separate conversations, and carry frustration from one agent interaction into another. These patterns are measurable, meaningful for the business, and largely uncaptured today. This article explains what those emergent signals look like, why they matter for retention, productivity, and cost savings, and how enterprises can start measuring them as multi-agent deployments scale.

How fast are enterprises scaling to multi-agent deployments?

Fast. According to Salesforce’s 2026 Connectivity Benchmark Report, based on a survey of 1,050 enterprise IT leaders, organizations currently run an average of 12 AI agents. That number is projected to grow 67% within two years. 83% of organizations report that most or all teams and functions have already adopted agents in some form.

The KPMG Q4 2025 AI Pulse Survey tells a similar story from the infrastructure side. For two consecutive quarters, 65% of enterprise leaders have cited agentic system complexity as their top barrier to scaling. Agent deployment more than doubled over the course of 2025, from 11% of organizations in Q1 to 26% in Q4.

Most of the enterprise investment around this scaling has gone into orchestration, governance, and security. Those are the right priorities for system-level coordination. What has received less attention is what happens to user behavior data when agents multiply.

What new user behavior patterns emerge in multi-agent environments?

In a single-agent deployment, user behavior is contained within one system. Every interaction, every signal, every pattern lives in one place. When an enterprise scales to multiple agents, user behavior starts to distribute across them. New patterns emerge that are specific to multi-agent environments.

Users develop agent preferences. When multiple agents are available, users gravitate toward specific agents for specific task types. They may use one agent for billing questions and a different one for technical issues, even if both agents are capable of handling either. They may try one agent, get an unsatisfying response, and switch to another. These preference patterns reveal which agents users trust for which kinds of work. In a single-agent environment, this signal does not exist.
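
One way to make agent preference measurable is to compute, for each task category, the share of interactions each agent receives. The sketch below is a minimal illustration, assuming a hypothetical interaction log of (user, agent, task category) records. The agent names, categories, and the `preference_share` helper are invented for the example and do not describe any particular product's data model.

```python
from collections import Counter, defaultdict

# Hypothetical interaction log: (user_id, agent, task_category).
interactions = [
    ("u1", "billing_agent", "billing"),
    ("u1", "billing_agent", "billing"),
    ("u1", "support_agent", "billing"),
    ("u2", "support_agent", "technical"),
    ("u2", "support_agent", "technical"),
    ("u2", "billing_agent", "technical"),
    ("u2", "support_agent", "technical"),
]


def preference_share(log):
    """Return {task_category: {agent: share of that category's interactions}}."""
    counts = defaultdict(Counter)
    for _user, agent, task in log:
        counts[task][agent] += 1
    return {
        task: {agent: n / sum(per_agent.values()) for agent, n in per_agent.items()}
        for task, per_agent in counts.items()
    }


shares = preference_share(interactions)
for task, per_agent in shares.items():
    print(task, per_agent)
```

A share that leans heavily toward one agent for a task both agents can handle is the kind of preference signal described above. The share alone does not explain why users prefer that agent.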

Business signals fragment across conversations. A user who raises a pricing concern with one agent and asks about cancellation policies through a different agent is expressing a retention risk. In a single-agent environment, both signals appear in the same conversation thread. In a multi-agent environment, they are split across two separate interactions with no inherent connection between them. The signal is there. It is distributed across systems that have no shared view of the user.

Friction cascades between agents. When one agent handles a task poorly, the user carries that experience into their next interaction with a different agent. The second conversation is colored by the first. But there is no data linking the two interactions. What looks like an unusually difficult conversation with Agent B may have originated as a poor experience with Agent A minutes earlier.

Task resolution becomes ambiguous across handoffs. When a user’s journey spans multiple agents (billing to support to onboarding), each agent may log its own interaction as complete. The user may have received three technically correct responses and still left without their underlying concern being addressed. In a single-agent deployment, this shows up as one unresolved interaction. In a multi-agent deployment, it can appear as three successful ones.
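
The gap between per-interaction and per-journey success can be shown with a small calculation. This is a sketch under stated assumptions: `journey_id` is a hypothetical identifier linking interactions about one concern, and `concern_resolved` is assumed to come from a separate journey-level judgment rather than from the agent's own log. The log is assumed to be in chronological order.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    journey_id: str              # hypothetical ID linking interactions about one concern
    agent: str
    agent_marked_complete: bool  # what each agent logs for itself
    concern_resolved: bool       # assumed journey-level judgment, not agent-reported


# Chronological log: journey j1 passes through three agents, j2 through one.
log = [
    Interaction("j1", "billing", True, False),
    Interaction("j1", "support", True, False),
    Interaction("j1", "onboarding", True, False),
    Interaction("j2", "billing", True, True),
]


def interaction_success_rate(log):
    """Share of interactions the agents themselves marked complete."""
    return sum(i.agent_marked_complete for i in log) / len(log)


def journey_success_rate(log):
    """Share of journeys whose final interaction resolved the concern."""
    final_state = {}
    for i in log:
        # Later interactions overwrite earlier ones, so the last one wins.
        final_state[i.journey_id] = i.concern_resolved
    return sum(final_state.values()) / len(final_state)


print(interaction_success_rate(log))
print(journey_success_rate(log))
```

In this toy data every interaction is logged as complete, yet only one of the two journeys ends with the concern resolved.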

Why do these patterns matter for the business?

These emergent patterns carry direct business implications. They are not abstract behavioral curiosities. They connect to retention, operational efficiency, and resource allocation.

Fragmented signals delay intervention. When a retention risk is expressed across two separate agent conversations, the window for proactive outreach narrows. Customer success teams working from single-agent data will see two unremarkable interactions. The composite picture, which tells a different story, is only visible when signals are aggregated across agents.

Agent preference patterns reveal trust distribution. If users consistently avoid a specific agent for a specific task type, that is a measurable signal about where the deployment is underperforming. It also identifies where investment in improving agent handling will have the most impact. Without cross-agent visibility, these patterns are invisible and the enterprise is allocating resources without knowing where the gaps are.

Redundant handling inflates costs. When multiple agents handle overlapping task categories independently, the enterprise may be maintaining three separate approaches to the same type of interaction with no comparative view of which one produces better outcomes. Identifying this redundancy requires visibility across agents, not within each one individually.

Cascading friction distorts performance measurement. If Agent B’s performance metrics look worse because users arrive frustrated from a poor experience with Agent A, optimizing Agent B in isolation will not solve the problem. The root cause is upstream. Seeing the cascade requires connecting user journeys across agents.
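
A rough way to check for this effect is to split one agent's success rate by whether the same user had a failed interaction with a different agent shortly beforehand. The sketch below assumes a hypothetical event log of (user, agent, timestamp, success) tuples and an arbitrary 30-minute window; both are illustrative choices. A difference between the two groups suggests a cascade but does not prove one.

```python
from datetime import datetime, timedelta

# Hypothetical events: (user_id, agent, timestamp, success).
events = [
    ("u1", "A", datetime(2026, 1, 5, 10, 0), False),
    ("u1", "B", datetime(2026, 1, 5, 10, 5), False),
    ("u2", "B", datetime(2026, 1, 5, 11, 0), True),
    ("u3", "A", datetime(2026, 1, 5, 12, 0), True),
    ("u3", "B", datetime(2026, 1, 5, 12, 10), True),
    ("u4", "A", datetime(2026, 1, 5, 9, 0), False),
    ("u4", "B", datetime(2026, 1, 5, 9, 20), True),
]

WINDOW = timedelta(minutes=30)  # assumed look-back window


def split_success_rate(events, agent, window=WINDOW):
    """Success rate for `agent`, split by a recent failure with another agent."""
    buckets = {"after_upstream_failure": [], "no_upstream_failure": []}
    for user, current_agent, ts, ok in events:
        if current_agent != agent:
            continue
        # Did this user fail with a different agent within the window before ts?
        upstream_failed = any(
            u == user
            and other != agent
            and not success
            and timedelta(0) < ts - t <= window
            for u, other, t, success in events
        )
        key = "after_upstream_failure" if upstream_failed else "no_upstream_failure"
        buckets[key].append(ok)
    return {k: (sum(v) / len(v) if v else None) for k, v in buckets.items()}


print(split_success_rate(events, "B"))
```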

How can enterprises start measuring cross-agent user behavior?

Measuring user behavior across multi-agent environments requires extending what enterprises already do for single-agent analytics. The foundational work of categorizing tasks, defining success criteria, and identifying behavioral signals remains the same. Multi-agent environments add three requirements on top.

A shared task taxonomy across agents. Every agent in the system needs to map to a common set of task categories. When billing inquiries are handled by a customer service agent, a specialized billing agent, and a self-service FAQ agent, all three need to be measured against the same definition of resolution. Without a shared taxonomy, each agent’s metrics exist in isolation.
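
As an illustration, a shared taxonomy can start as a lookup from each agent's local intent label to a common category. The sketch below is hypothetical throughout: the agent names, local labels, and category names are invented, and a real taxonomy would likely be larger and maintained outside the code.

```python
from collections import defaultdict

# Hypothetical mapping: (agent, agent-local label) -> shared task category.
TAXONOMY = {
    ("customer_service", "invoice_question"): "billing_inquiry",
    ("billing_agent", "charge_dispute"): "billing_inquiry",
    ("faq_agent", "how_am_i_billed"): "billing_inquiry",
    ("customer_service", "password_reset"): "account_access",
}


def to_shared_category(agent, local_label):
    """Map an agent-local label to the shared category, or 'unmapped'."""
    return TAXONOMY.get((agent, local_label), "unmapped")


def resolution_rate_by_category(records):
    """records: (agent, local_label, resolved). Returns {category: {agent: rate}}."""
    totals = defaultdict(lambda: [0, 0])  # (category, agent) -> [resolved, total]
    for agent, label, resolved in records:
        key = (to_shared_category(agent, label), agent)
        totals[key][0] += int(resolved)
        totals[key][1] += 1
    rates = defaultdict(dict)
    for (category, agent), (ok, n) in totals.items():
        rates[category][agent] = ok / n
    return dict(rates)


records = [
    ("customer_service", "invoice_question", True),
    ("customer_service", "invoice_question", False),
    ("billing_agent", "charge_dispute", True),
    ("faq_agent", "how_am_i_billed", False),
    ("faq_agent", "new_label", True),
]
rates = resolution_rate_by_category(records)
print(rates)
```

Keeping an explicit `unmapped` bucket makes gaps in the taxonomy visible instead of silently dropping those interactions.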

User journey stitching across agents. When a user interacts with multiple agents around a single concern, those interactions need to connect into a single view. This means identifying that the same user asked about pricing in one conversation and cancellation in another, and surfacing that sequence as a coherent signal rather than two unrelated data points.
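
A simple starting point for stitching is to group events by user, order them by time, and start a new journey whenever the gap between consecutive events exceeds a threshold. The sketch below assumes a hypothetical event tuple of (user, agent, timestamp, topic) and a seven-day gap; both are illustrative. Time gaps are only a heuristic, and deciding that two interactions concern the same underlying issue would likely need more than timestamps.

```python
from datetime import datetime, timedelta
from itertools import groupby


def stitch_journeys(events, max_gap=timedelta(days=7)):
    """Group (user_id, agent, timestamp, topic) events into per-user journeys.

    Consecutive events from the same user stay in one journey as long as
    the time between them does not exceed `max_gap`.
    """
    journeys = []
    ordered = sorted(events, key=lambda e: (e[0], e[2]))
    for _user, user_events in groupby(ordered, key=lambda e: e[0]):
        current = []
        for event in user_events:
            if current and event[2] - current[-1][2] > max_gap:
                journeys.append(current)
                current = []
            current.append(event)
        journeys.append(current)
    return journeys


events = [
    ("u1", "sales_agent", datetime(2026, 3, 2, 9, 0), "pricing"),
    ("u2", "support_agent", datetime(2026, 3, 2, 10, 0), "technical"),
    ("u1", "support_agent", datetime(2026, 3, 4, 14, 0), "cancellation"),
    ("u1", "billing_agent", datetime(2026, 4, 20, 9, 0), "invoice"),
]

journeys = stitch_journeys(events)
for journey in journeys:
    print([(agent, topic) for _user, agent, _ts, topic in journey])
```

Here the pricing and cancellation conversations from the same user land in one journey even though different agents handled them, which is the sequence the paragraph above describes.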

Aggregated signal detection. Behavioral signals (competitive mentions, repeated task attempts, abandonment patterns, escalation requests) need to aggregate across agents into a unified view. A signal that appears once in one agent conversation may be unremarkable. The same signal appearing across three agent conversations from the same user in the same week tells a different story.
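
The "same signal, same user, same week" rule can be sketched as a count of distinct conversations per user, week, and signal type. The example assumes signals have already been extracted from conversations into hypothetical (user, agent, conversation, day, signal) records. The threshold of three conversations and the use of ISO calendar weeks are illustrative choices.

```python
from collections import defaultdict
from datetime import date


def flag_repeated_signals(signal_events, min_conversations=3):
    """Return {(user_id, iso_year, iso_week, signal)} seen in enough conversations.

    signal_events: iterable of (user_id, agent, conversation_id, day, signal).
    """
    conversations = defaultdict(set)
    for user, _agent, conversation_id, day, signal in signal_events:
        iso = day.isocalendar()  # (ISO year, ISO week, ISO weekday)
        conversations[(user, iso[0], iso[1], signal)].add(conversation_id)
    return {
        key for key, convs in conversations.items()
        if len(convs) >= min_conversations
    }


signal_events = [
    ("u1", "sales_agent", "c1", date(2026, 3, 2), "competitive_mention"),
    ("u1", "support_agent", "c2", date(2026, 3, 3), "competitive_mention"),
    ("u1", "billing_agent", "c3", date(2026, 3, 5), "competitive_mention"),
    ("u2", "support_agent", "c4", date(2026, 3, 3), "competitive_mention"),
    ("u1", "support_agent", "c2", date(2026, 3, 3), "escalation_request"),
]

flags = flag_repeated_signals(signal_events)
print(flags)
```

Only the user whose competitive mentions span three conversations in one week is flagged. The single mention from the other user stays below the threshold.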

How does this scale as agent count grows?

Salesforce’s data shows enterprises running 12 agents on average today, with 67% growth projected. KPMG’s survey found that leaders are preparing to scale agent systems enterprise-wide, investing in infrastructure and governance to run multi-agent systems reliably.

As agent count grows, the space of possible cross-agent journeys grows faster than the agent count itself. With 5 agents, a user who interacts with 2 in a single session could be using any of 10 agent pairs. With 20 agents, there are 190 possible pairs, before counting journeys that span three or more agents. The behavioral signals that emerge across those journeys become richer and more complex.
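
The growth can be made concrete by counting how many distinct sets of agents a journey could touch. This is a simplified sketch: it assumes any combination of agents is possible and ignores the order in which agents are visited, so it undercounts ordered journeys. The function name `possible_agent_sets` is introduced here for illustration.

```python
from math import comb


def possible_agent_sets(n_agents, agents_per_journey):
    """Number of distinct unordered sets of agents a journey could involve."""
    return comb(n_agents, agents_per_journey)


# Agent counts: 5 and 20 from the example, 12 as the reported current average.
for n in (5, 12, 20):
    pairs = possible_agent_sets(n, 2)
    triples = possible_agent_sets(n, 3)
    print(f"{n} agents: {pairs} possible pairs, {triples} possible triples")
```

Under these assumptions, 5 agents give 10 pairs and 10 triples, 12 agents give 66 pairs and 220 triples, and 20 agents give 190 pairs and 1,140 triples. Quadrupling the agent count from 5 to 20 multiplies the possible pairs by 19.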

Enterprises that build cross-agent measurement early will have a compounding advantage. They will understand where users are getting value across their agent ecosystem, where friction is concentrating, and where business-level signals are surfacing. Enterprises that wait will face the same challenge later with more agents and more data to untangle.

The Salesforce report found that 86% of IT leaders are concerned that agents will introduce more complexity than value without proper integration. That concern extends beyond system integration. It applies equally to understanding what users are experiencing across those systems.

How Nebuly measures user behavior across multi-agent deployments

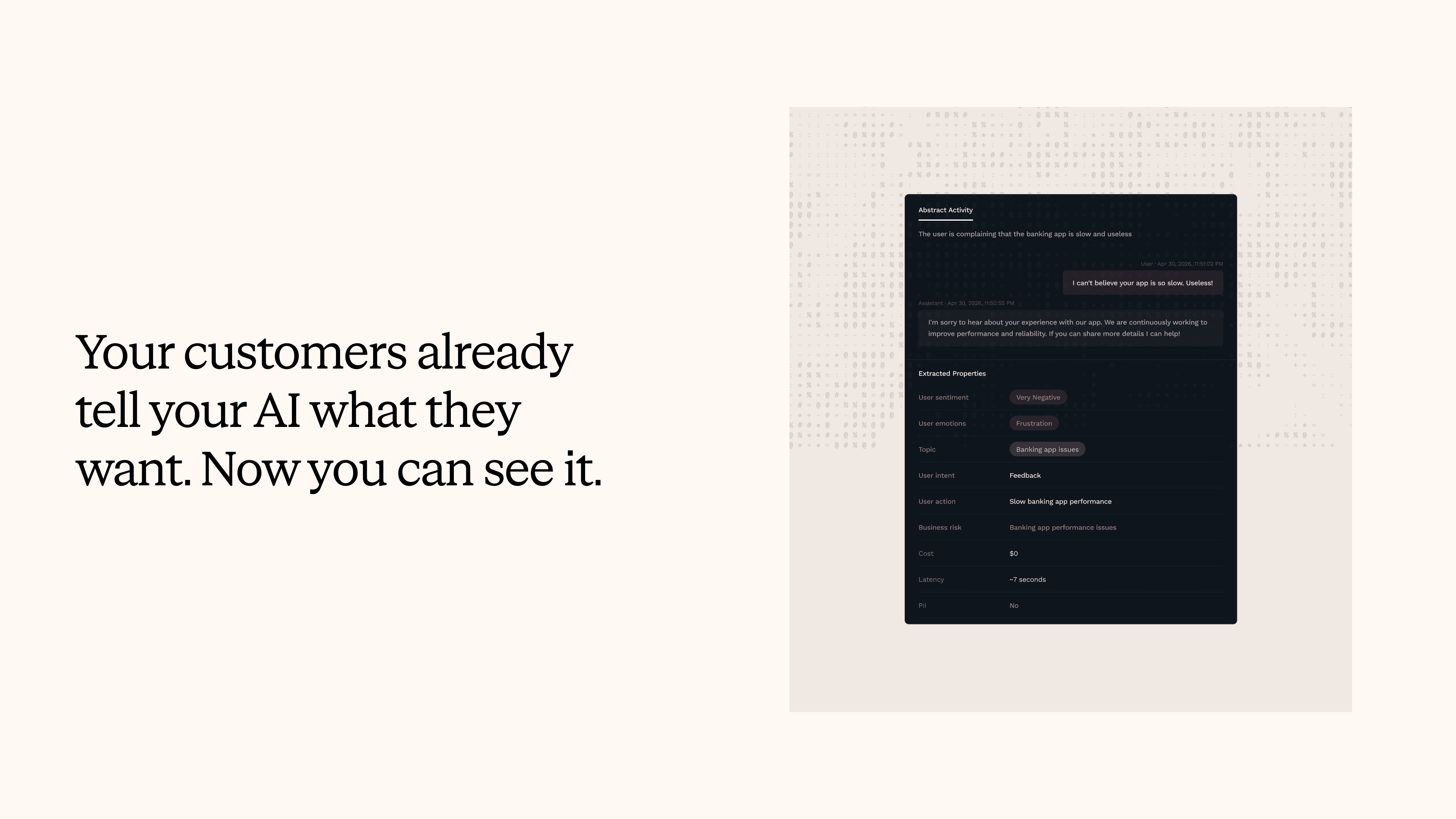

Nebuly is the user analytics platform for AI agents. It measures task-level success and failure patterns by analyzing conversation content, identifying behavioral signals that users express during interactions, and connecting those signals to business outcomes like retention, productivity, and cost savings.

In single-agent deployments, Nebuly captures signals like competitive concerns, repeated task attempts, abandonment patterns, and escalation requests from conversation data without relying on explicit user feedback. These signals connect directly to measurable business impact.

As enterprises scale to multi-agent environments, Nebuly extends the same analytical framework across agents. It provides a shared task taxonomy so performance is comparable across agents handling similar work, user journey stitching so interactions across agents connect into a single view, and aggregated signal detection so behavioral patterns distributed across separate conversations surface as unified, actionable insights.

For enterprises scaling from experimentation to production with multiple agents, the user behavior signals emerging across those agents are a new source of business intelligence. Nebuly is built to capture them.

FAQs

Why can’t I use my existing analytics tools to measure AI agent performance?

How do you measure AI agent adoption across a large enterprise?

What are AI Upsell Signals?

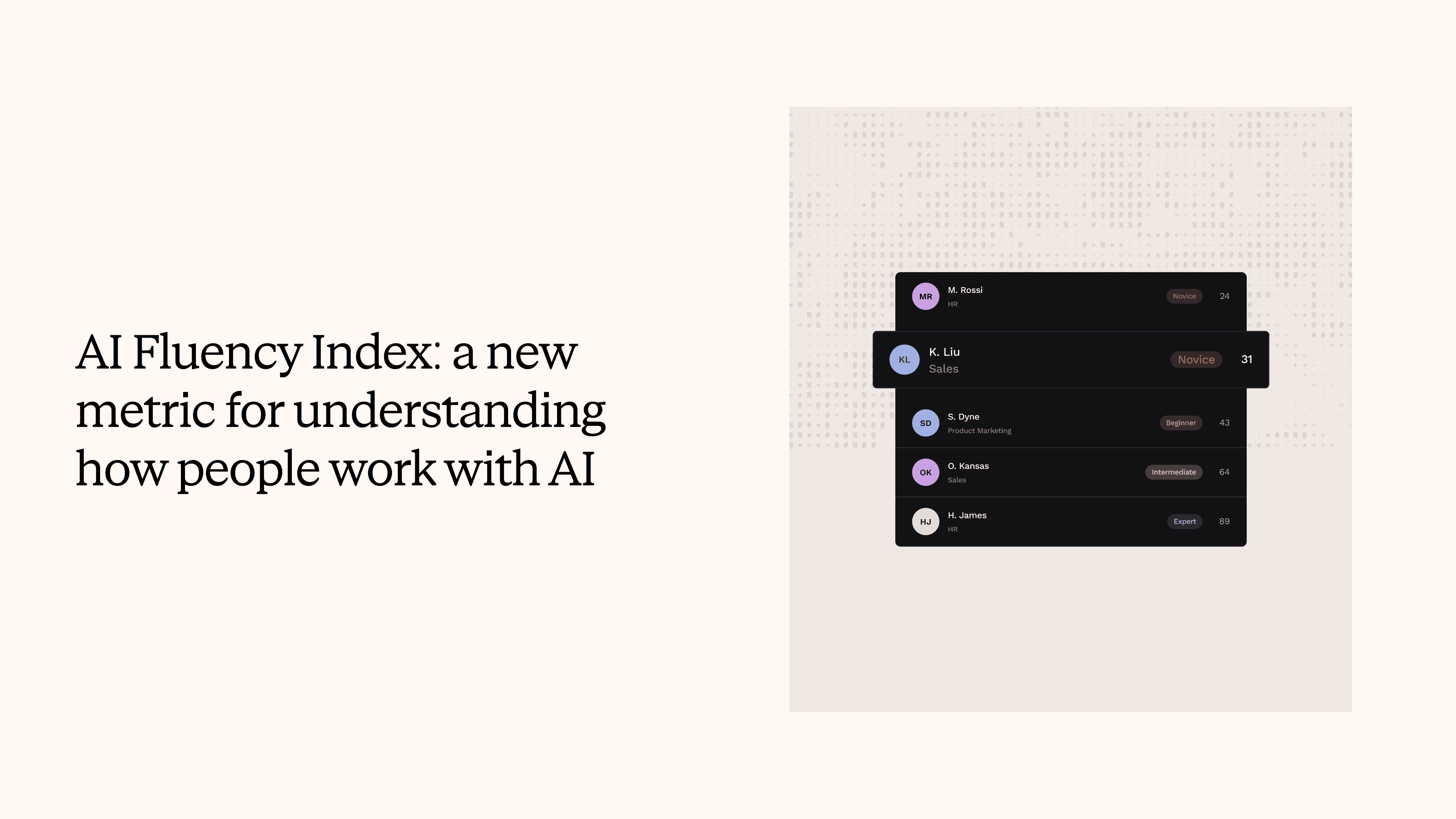

What is an AI Fluency Index?

What are AI Churn Signals?

How do I know if my customer-facing AI agent is actually working?

What metrics should I track for an employee-facing AI copilot?

How do you measure the ROI of an AI agent?
