2026 is the year AI teams have to show the math.

For the past two years, enterprises have been deploying AI agents. Employee copilots, customer service bots, internal knowledge assistants, outbound sales agents. The deployment question has largely been answered. The new question is harder.

Can you prove it’s working?

Not in the abstract. Not with session counts or message volumes. Can you show which tasks are being completed, which are failing, and what the business impact is in either direction?

Most organizations can’t. They know the system is running. They know people are logging in. But the connection between usage and value is missing. And in 2026, with AI budgets under scrutiny across every major industry, that’s the connection that matters most.

The framework below is designed for that measurement challenge. It breaks the “is it working?” question into five sequential questions, applied to two deployment contexts: agents that serve employees and agents that serve customers. The logic is the same. What you’re measuring at the end is different.

Part 1: Measuring employee-facing AI agents

Employee AI agents are built for one purpose: productivity. But productivity is notoriously hard to measure in a system built around conversations. Here’s the sequence that makes it tractable.

1. Who is using it and who isn’t?

Before anything else, you need to know your actual adoption picture. Not just total users, but which teams, departments, and geographies are genuinely engaging versus those who tried it once and moved on.

This matters because adoption gaps are almost never random. A department that isn’t using your AI is usually telling you something: the interface doesn’t fit their workflow, the training was insufficient, or the agent doesn’t serve their actual tasks well. Adoption is the baseline for everything that follows.

2. What are they using it for?

Every employee uses an AI agent differently. A sales rep and a finance analyst working inside the same copilot will use it in completely distinct ways, and the value they get will be completely distinct too.

This is where AI Use Cases measurement becomes critical. It means surfacing the real questions, tasks, and workflows employees bring to AI agents, rather than assuming the deployment matches the design. Understanding which task types are driving real usage tells you two things: where value is already concentrating, and where the agent is being ignored for tasks it was designed to handle. Both are signals worth acting on.

3. Are they succeeding?

Usage doesn’t equal value. An employee who asks the agent for help and then solves the problem themselves in a spreadsheet used the agent without getting any benefit from it.

AI Success measures something harder than engagement: are people completing what they came to do? This is where you find out which use cases are producing outcomes and which ones are producing frustration. It’s also where you find out what needs to improve. When tracked at the task level, patterns emerge quickly. Certain task types show high completion rates while others consistently stall.

4. What is the actual business benefit?

Once you know what employees are successfully accomplishing with the agent, you can connect those completions to business impact. Time saved on a specific task. Volume of requests resolved without human escalation. Reduction in repeated questions to HR or IT.

AI ROI is the practice of mapping completed tasks to time saved and dollar value. It turns task completion data into a business case. The closer you can get to “employees in department X are saving Y hours per week on task Z,” the more defensible the budget conversation becomes.

5. How fluent is your organization?

Not everyone uses AI equally well, and that gap tends to widen over time without intervention. Some employees will find sophisticated uses and get significant value. Others will use the agent for basic queries and conclude it’s not that useful.

The AI Fluency Index measures how effectively different functions, departments, and geographies use AI agents. It tells you where training moves the needle. It also tells you where your most effective users are, so you can learn from what they’re doing and spread it. An organization may report 70% AI adoption, but if the top 15% of users are generating 80% of the value, the investment is underperforming relative to its potential. Fluency measurement identifies exactly where intervention would produce the largest improvement in outcomes.

Part 2: Measuring customer-facing AI agents

Customer-facing agents interact with the people who pay. The stakes are different, and so is what you’re trying to measure. The same five-question sequence applies, but the outcomes at the end are retention risk and revenue opportunity rather than ROI and fluency.

1. Who is engaging with the agent?

Are customers actually using the agent, or bypassing it to call support? Which customer segments engage most? Which ones bounce immediately?

This is your adoption baseline, same as with employee agents. But here, low adoption often has a faster business consequence. Customers who bypass the agent and go straight to human support are more expensive to serve, and customers who can’t get what they need from the agent churn faster.

2. What are they asking about?

Conversation analytics at scale surfaces something traditional survey or ticket data can’t: the real distribution of what customers actually want.

Topic Intelligence is the practice of analyzing every conversation between customers and an AI agent to surface recurring request types, friction points, and unmet needs. You’ll find patterns you didn’t design for, friction that shows up across thousands of conversations before it appears in any support report, and unmet needs that represent both product improvement opportunities and potential new features. This is structured intelligence that product and business teams can act on directly.

3. Is the agent delivering value?

Is the agent answering what customers are asking, or deflecting? Where are the knowledge gaps? What topics consistently produce failed or incomplete responses?

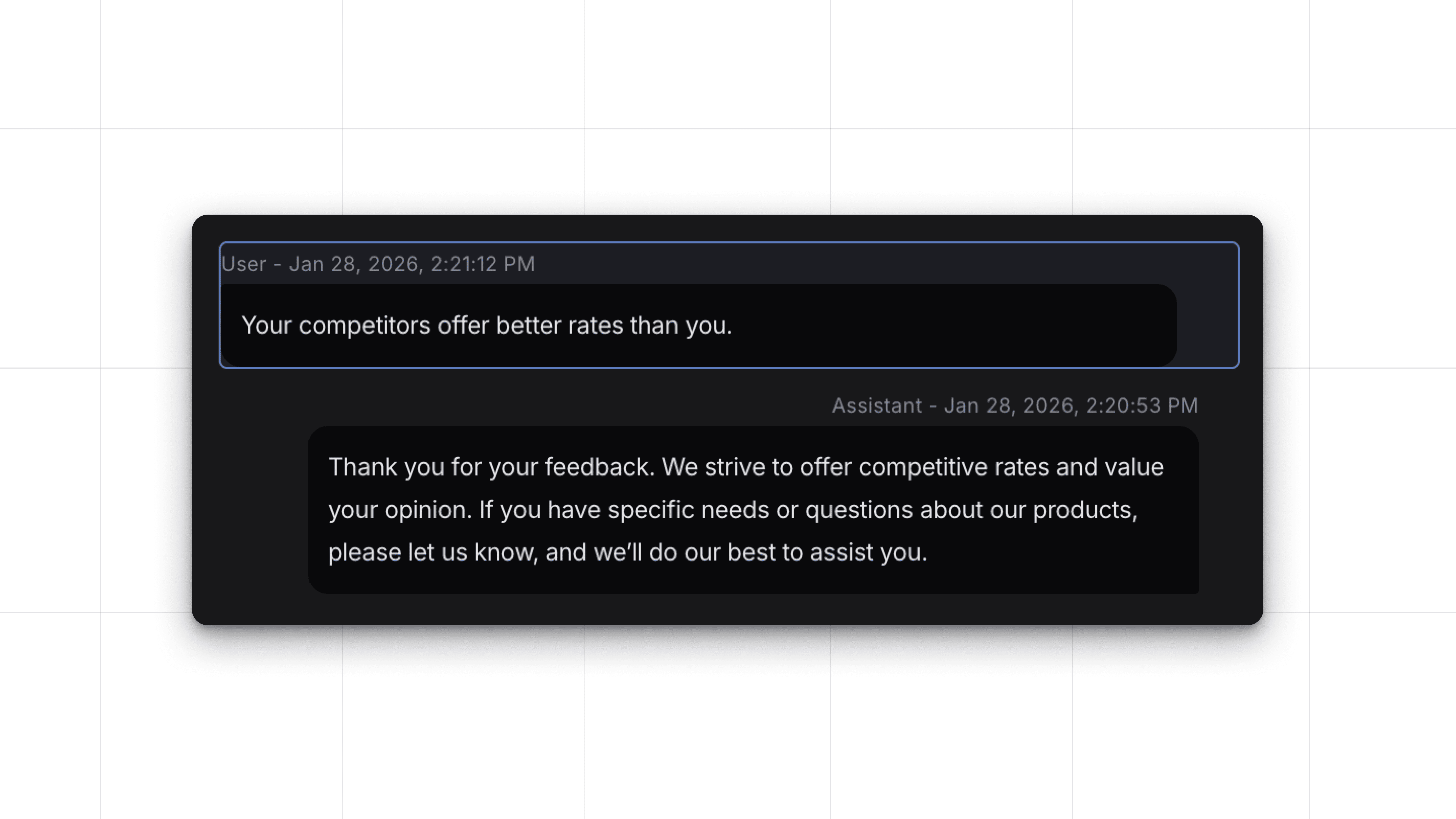

AI Success in a customer-facing context tracks whether the agent actually resolves what customers are looking for. It surfaces knowledge gaps, unanswered questions, and failure points by task category. The conversations that end in frustration or escalation are telling you specifically where the agent needs to improve.

4. Are there signals that customers are at risk?

Conversations contain churn signals long before they show up in retention data. Customers expressing frustration with pricing, comparing your product to a competitor, or repeatedly failing to resolve the same issue are showing you something that your CRM or CSAT score won’t capture until it’s too late.

AI Churn Signals refers to the detection of these retention risk indicators within agent conversations. Most unhappy customers leave without complaining through official channels. The signals are present in conversation data. Spotting these patterns in aggregate, across thousands of conversations rather than just the ones that end in a support ticket, gives you a window to act before customers leave.

5. Are there signals of revenue opportunity?

Customers tell your AI what they need. They ask about features they don’t have, products they’re considering, upgrades they’re wondering about. In most deployments, none of that signal reaches anyone on the revenue side.

AI Upsell Signals is the practice of detecting buying intent expressed in customer conversations with AI agents and surfacing those moments so sales or customer success teams can follow up. The conversations are happening. The data is there. It’s a matter of whether anyone is reading it. When a customer expresses interest in a capability they don’t currently have and the agent responds with a generic answer, that revenue signal closes with the conversation.

The logic underneath the framework

The sequence is the same in both contexts: Adoption, then Understanding, then Success, then Business Outcomes. Each question only becomes answerable if you’ve answered the one before it.

You can’t measure task success if you don’t know what tasks people are using the agent for. You can’t connect completions to business impact if you don’t know which completions count as success. You can’t find churn signals in conversation data if you’re not analyzing conversations at all.

The framework is a diagnostic. If you can answer all five questions for your deployment, you have the visibility you need to improve it and defend it. If you can’t, the gap tells you exactly where to focus.

About Nebuly

Nebuly is a user analytics platform built for GenAI and Agentic AI products. It sits between your users and your AI as an invisible analytics layer, reading the conversations that are already happening and surfacing the signals embedded in them.

The reason this is harder than it sounds is that conversational AI doesn’t produce the structured event data that traditional analytics tools are built to read. Nebuly analyzes natural language at scale, extracting topic distribution, user intent, task outcomes, sentiment, and implicit feedback signals, and makes them available as the kind of structured insights the five-question framework above depends on.

For enterprises that have already deployed agents and are now under pressure to prove their value, Nebuly provides the measurement layer that makes that proof possible. If you want to learn more about how this applies to your context, book a demo.