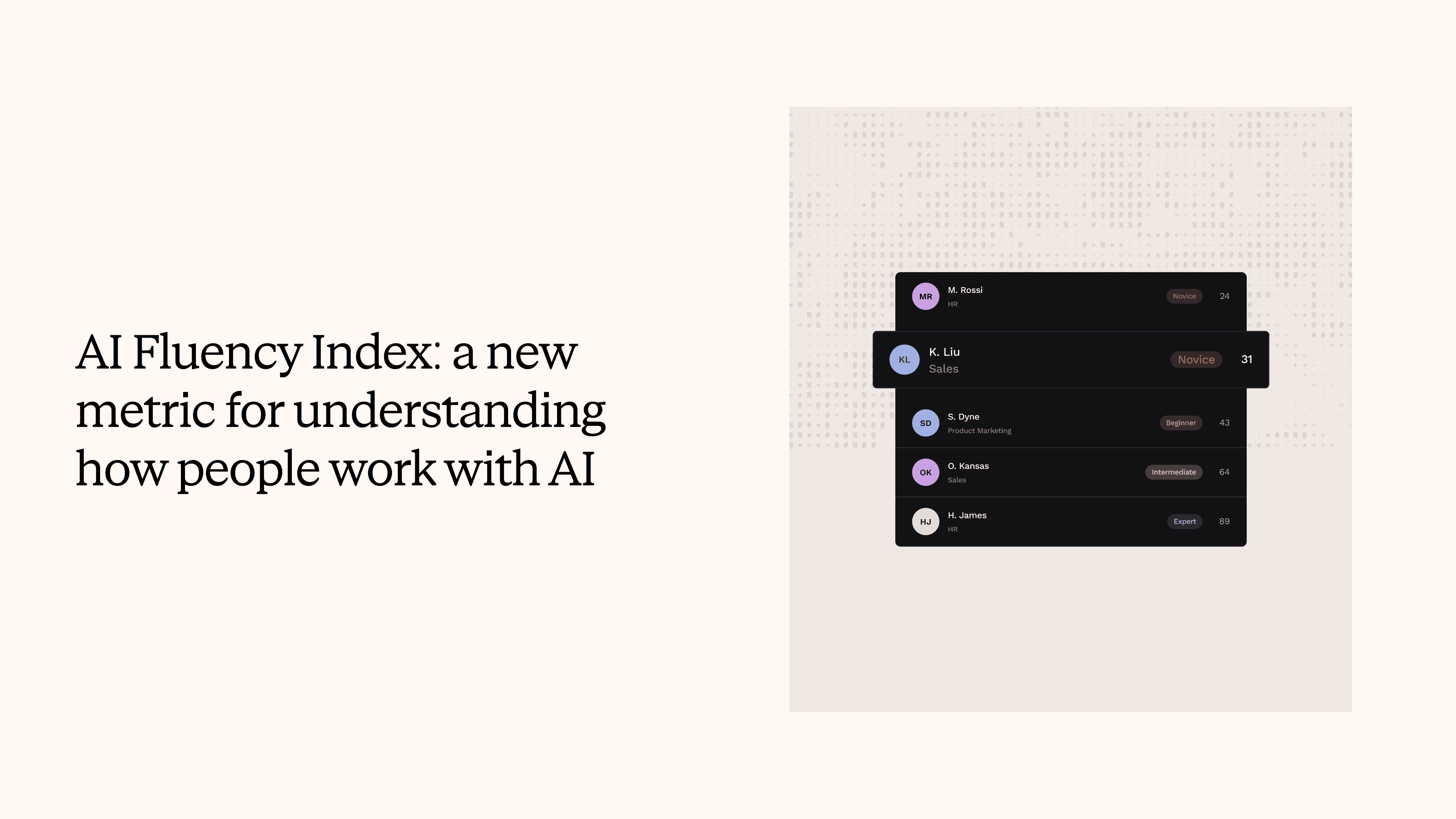

AI Fluency Index: a new metric for understanding how people work with AI

TLDR

- Teams tracking AI adoption already have strong visibility into how many employees use their AI tools. AI Fluency Index adds a new dimension: how effectively they use them.
- Fluency is measured through anonymised behavioural signals within AI conversations, what we call implicit feedback, like rephrasing patterns, task completion, and prompt specificity.
- This gives HR, L&D, and product teams a new metric to work with: one that connects adoption to productivity and helps target enablement where it will have the most impact.
- All data is anonymised and aggregated. No individual employee is identified.

If you're tracking adoption of your internal AI tools, you already know something important: how many people are using them, how often, and in which parts of the organisation. That is valuable data, and having it puts you ahead of most teams deploying AI internally.

AI Fluency Index is a new metric that builds on top of that foundation. Where adoption measures reach, fluency measures effectiveness. It answers a different question: when employees interact with AI tools, how productive are those interactions?

What fluency looks like as a metric

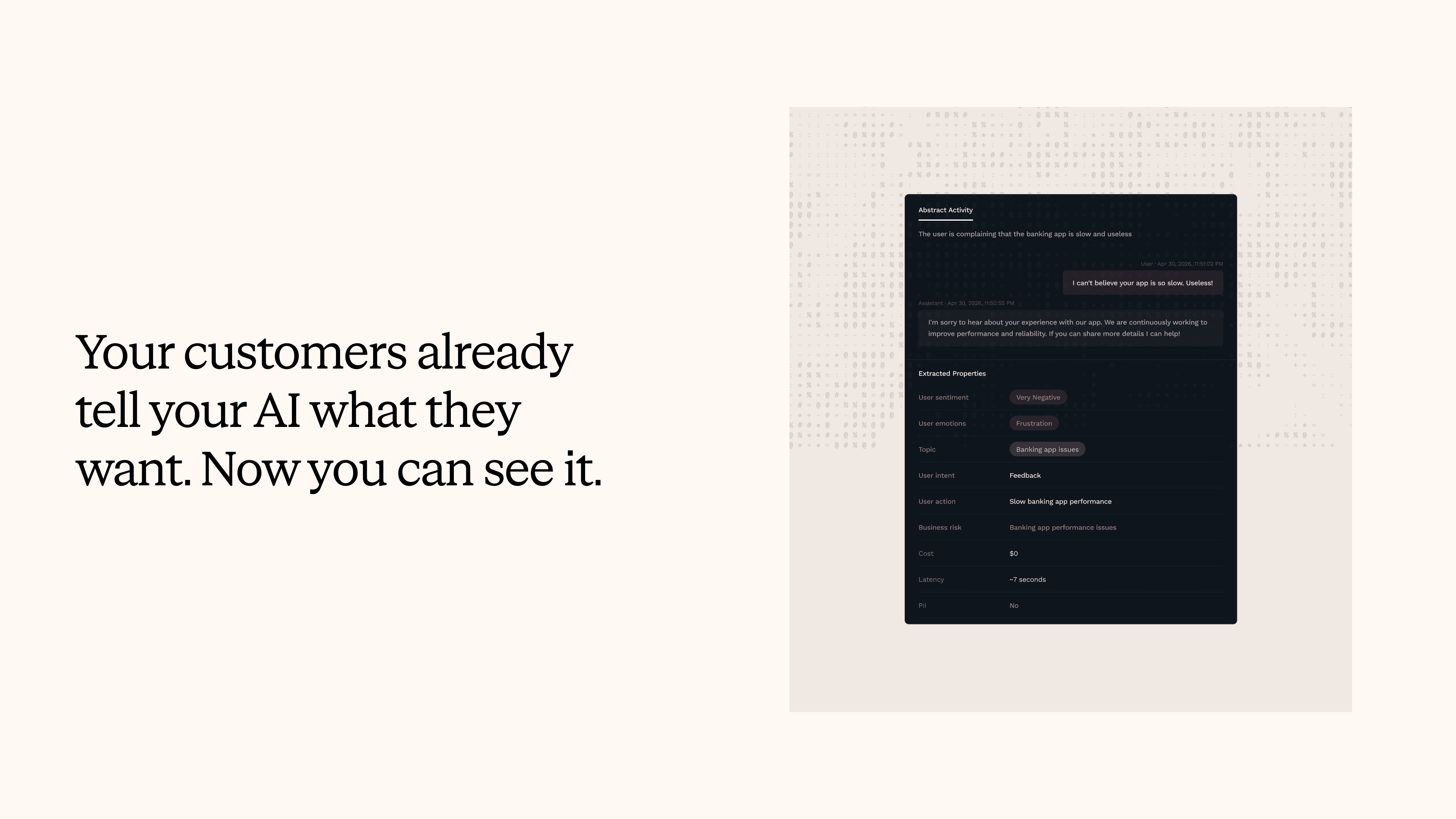

Fluency is based on what we call implicit feedback: behavioural signals within AI conversations that reveal the quality of an interaction, without requiring surveys or self-reporting. Implicit feedback includes patterns like rephrasing (the employee needed multiple attempts to get a useful answer), quick task completion (the tool worked well on the first try), abandonment (the employee gave up before getting a useful answer), and prompt specificity (the employee knows how to ask for what they need).

These signals are already present in every AI conversation. AI Fluency Index reads them, anonymises them, and turns them into a score that can be viewed by department, by role, by tool, and over time.
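To make the idea concrete, here is a minimal sketch of how signals like these could be combined into a score and rolled up by department. It is an illustration only, not the scoring method AI Fluency Index uses: the `Conversation` record, its field names, the 0 to 100 scale, and every weight below are assumptions chosen for readability. Note that the record carries no user identifier, only the grouping dimension.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Conversation:
    """Hypothetical, already-anonymised record of one AI conversation."""
    department: str
    rephrase_count: int        # times the request was restated
    completed: bool            # the task reached a usable answer
    abandoned: bool            # the employee gave up
    prompt_specificity: float  # 0.0 (vague) to 1.0 (specific)


def conversation_score(c: Conversation) -> float:
    """Combine the signals into a 0-100 score. Weights are illustrative."""
    score = 50.0
    if c.completed:
        score += 30.0
    if c.abandoned:
        score -= 30.0
    score -= 10.0 * min(c.rephrase_count, 3)  # cap the rephrasing penalty
    score += 20.0 * c.prompt_specificity
    return max(0.0, min(100.0, score))        # clamp to the 0-100 range


def fluency_by_department(conversations):
    """Average the per-conversation scores within each department."""
    grouped = defaultdict(list)
    for c in conversations:
        grouped[c.department].append(conversation_score(c))
    return {dept: round(mean(scores), 1) for dept, scores in grouped.items()}


sample = [
    Conversation("sales", 0, True, False, 1.0),
    Conversation("sales", 3, False, True, 0.0),
    Conversation("legal", 1, True, False, 0.5),
]
print(fluency_by_department(sample))
```

The same grouping step could be keyed on role, tool, or time period instead of department to produce the other views described above.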

All of this is fully anonymised and aggregated. No individual employee is identified, and no conversation is attributed to a specific person. Teams see fluency at the level where it's useful for decisions, not at the level of individual behaviour.
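One common way to keep aggregated reporting from pointing back at individuals is to suppress any group that is too small. The sketch below shows that general technique under stated assumptions; the post does not describe how AI Fluency Index implements anonymisation, and the `MIN_GROUP_SIZE` value and function name are hypothetical.

```python
from statistics import mean
from typing import Optional

MIN_GROUP_SIZE = 5  # hypothetical threshold; smaller groups are not reported


def aggregate_scores(
    scores_by_group: dict[str, list[float]],
    min_group_size: int = MIN_GROUP_SIZE,
) -> dict[str, Optional[float]]:
    """Return the mean score per group, or None when the group is too small."""
    report: dict[str, Optional[float]] = {}
    for group, scores in scores_by_group.items():
        if len(scores) < min_group_size:
            report[group] = None  # suppressed: too few data points to publish
        else:
            report[group] = round(mean(scores), 1)
    return report


print(aggregate_scores({
    "sales": [80.0, 70.0, 90.0, 60.0, 100.0],
    "legal": [95.0, 40.0],
}))
```

In this sketch the group size counts conversations rather than people, because the input carries no user identifiers at all.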

What becomes visible when you can measure fluency

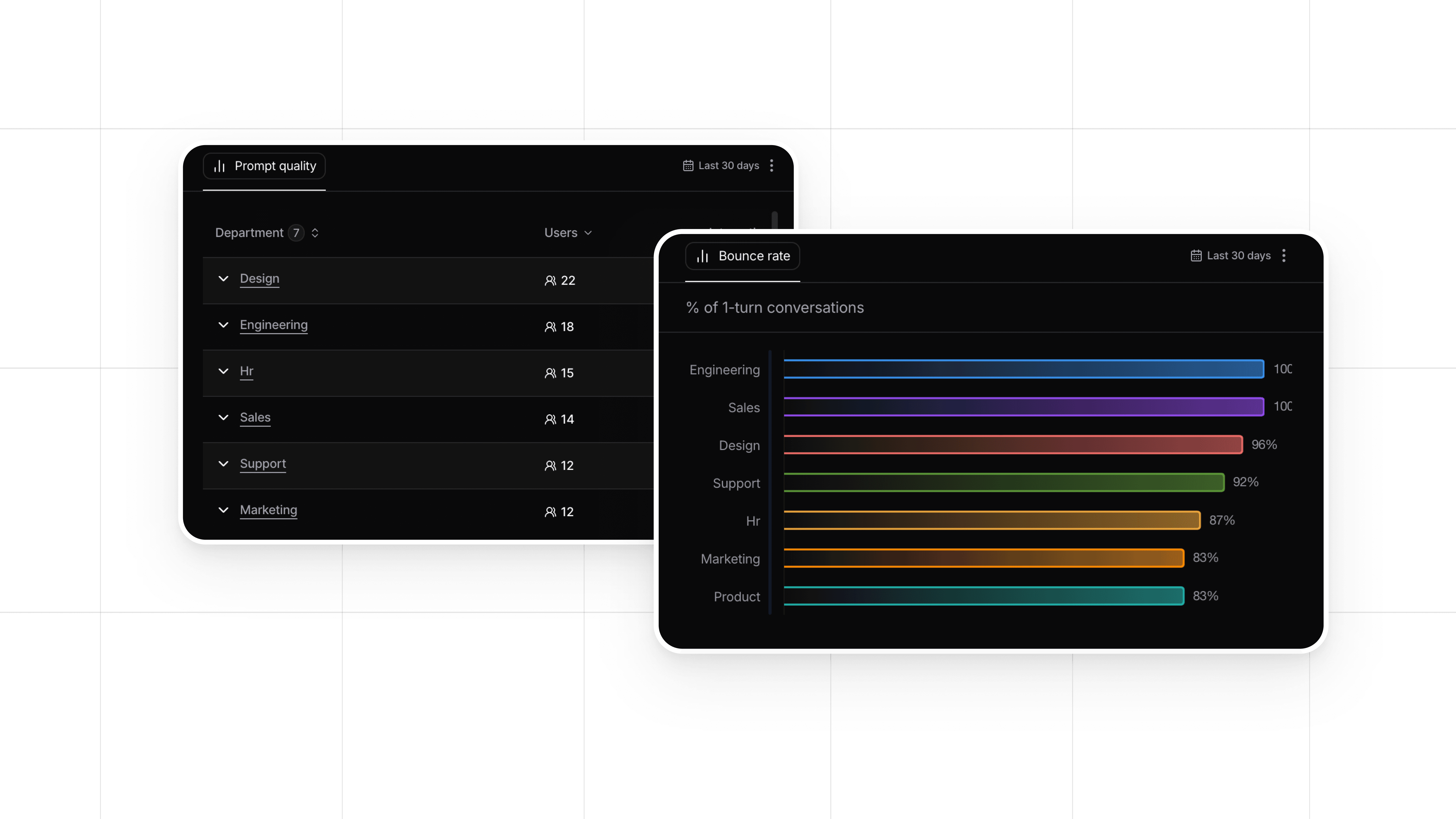

Fluency as a metric opens up questions that adoption alone doesn't cover.

A team with strong adoption and strong fluency is getting real value from its AI tools. That's a signal to invest further, expand use cases, and learn from what's working.

A team with strong adoption and lower fluency is a different kind of signal. It could mean the tool needs better design for that team's specific workflows. It could mean there's an opportunity for targeted enablement. It could mean the AI performs differently across different types of tasks. Each of those is a specific, actionable insight.
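The two readings above can be expressed as a small decision rule. This is a sketch for illustration: the function name, the thresholds for "strong" adoption and fluency, and the 0 to 100 fluency scale are assumptions, not values defined by AI Fluency Index.

```python
def read_team_signal(
    adoption_rate: float,             # share of the team using the tool, 0.0-1.0
    fluency_score: float,             # assumed 0-100 scale
    adoption_threshold: float = 0.6,  # hypothetical cut-off for "strong" adoption
    fluency_threshold: float = 70.0,  # hypothetical cut-off for "strong" fluency
) -> str:
    """Map a team's adoption and fluency to the readings described in the text."""
    if adoption_rate < adoption_threshold:
        # The text only discusses teams with strong adoption.
        return "adoption not yet strong"
    if fluency_score >= fluency_threshold:
        return "invest further, expand use cases, learn from what works"
    return "investigate tool design, targeted enablement, or task-level performance"


print(read_team_signal(adoption_rate=0.85, fluency_score=82.0))
print(read_team_signal(adoption_rate=0.85, fluency_score=48.0))
```

A rule like this only flags where to look. Deciding which of the three explanations applies to a lower-fluency team still takes a closer look at that team's workflows and tasks.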

Over time, fluency data shows whether enablement programmes are working, whether new AI tools are easier or harder for employees to use, and which teams are maturing fastest. It adds a layer of depth to the adoption data you already have.

A metric for the next phase of AI deployment

Adoption was the right first metric for AI deployment, and it remains important. It answers the essential question of whether people are using the tools you've invested in.

Fluency is the metric for the next set of questions. Are people getting value from those tools? Where is the experience strongest? Where could it be better? What should we invest in next?

AI Fluency Index makes that measurable, through anonymised, privacy-safe behavioural signals that are already present in every AI conversation. No new surveys. No changes to the AI tools themselves. Nebuly connects to existing AI products and reads the implicit feedback employees generate naturally, every time they interact with an AI assistant.

Want to see this in practice? Book a demo.
